Best Theoretical Lap

The best theoretical lap is used to calculate both the "Time Slip" and the "Rate of Time Slip", and it also appears in the summary statistics. It is calculated by taking the fastest "bits" of all the data currently loaded into the program and combining them into a single best possible lap that you could have driven. Note that whilst the best theoretical lap is used to calculate internal results, it is not possible to look at its data directly. However, since that data is only an ensemble of parts of other runs, it is of course possible to look at the component parts if required.

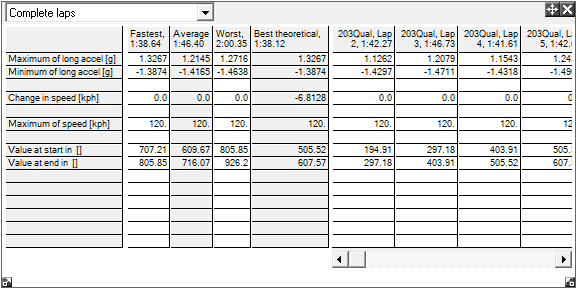

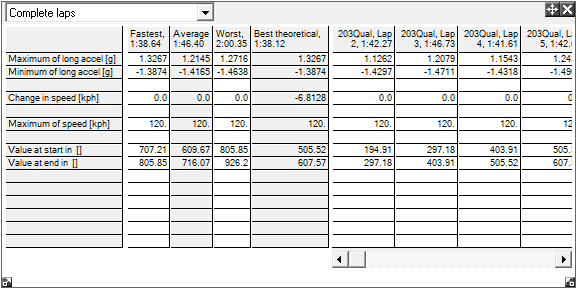

Within the software, each lap is cut into sections wherever you add a track marker to the track map. If you add only a lap marker and no track markers, your best theoretical lap is simply the fastest whole lap that you drove. If you add a single track marker, the lap is cut into 2 sections: one runs from the lap marker to the new track marker, and the other runs from the track marker back to the lap marker. Similarly, if you add 10 track markers, the software divides the track into 11 sections, and so on. To view the best theoretical lap, open the Summary Statistics window and select "Complete Laps" from the drop-down menu at the top left of the window.
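The sectioning described above can be illustrated with a short sketch. This is not the software's own code: the function name `best_theoretical_lap`, the list-of-lists layout, and the lap times are all hypothetical, chosen only to show the idea of picking the fastest time for each section and adding them up.

```python
from typing import List, Tuple


def best_theoretical_lap(section_times: List[List[float]]) -> Tuple[float, List[int]]:
    """Combine the fastest time for each section across all laps.

    section_times[lap][section] is the time in seconds for one section of
    one lap (hypothetical layout, not the software's internal format).
    Returns the summed best time and, for each section, the index of the
    lap that the section was taken from.
    """
    if not section_times:
        raise ValueError("at least one lap is required")
    n_sections = len(section_times[0])
    if any(len(lap) != n_sections for lap in section_times):
        raise ValueError("every lap must have the same number of sections")

    source_laps = []
    total = 0.0
    for s in range(n_sections):
        # Pick the lap that was quickest through this section.
        best_lap = min(range(len(section_times)), key=lambda i: section_times[i][s])
        source_laps.append(best_lap)
        total += section_times[best_lap][s]
    return total, source_laps


# Made-up data: three laps, one lap marker plus two track markers = 3 sections.
laps = [
    [30.5, 41.0, 25.0],
    [31.0, 40.0, 25.5],
    [30.0, 42.0, 24.5],
]
best_time, sources = best_theoretical_lap(laps)
fastest_real_lap = min(sum(lap) for lap in laps)
```

With this made-up data, `best_time` is lower than `fastest_real_lap`, and `sources` records which lap each section came from, which is how the component parts can still be inspected even though the combined lap itself cannot. With no track markers there is a single section per lap, and the result reduces to the fastest whole lap.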

There are limitations to the "best theoretical lap" system. For example, if you drive into a corner too fast, you will be forced to take a poor line and your exit speed will be low. However, the software may take the entry from one lap and the exit from another, resulting in a fast but technically impossible corner. Another limitation occurs with very closely spaced markers: the accuracy of the track maps is a few meters, so if you added, say, 50 markers to a short circuit, you may find that you start to get unrealistically fast timings due to an accumulation of small errors in the data.
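The accumulation of small errors can be sketched with a deliberately simplified model. Everything here is an assumption made for illustration: two laps with identical true times, an even number of equal sections, and a fixed timing error of 0.1 s per section that reads long on one lap and short on the other. The function name `apparent_best_lap` is hypothetical.

```python
def apparent_best_lap(true_lap_time: float, n_sections: int, timing_error: float) -> float:
    """Best theoretical lap for two identical laps whose section times
    carry equal and opposite errors (n_sections is assumed to be even)."""
    true_section = true_lap_time / n_sections
    # Lap A reads long, short, long, ...; lap B reads short, long, short, ...
    lap_a = [true_section + (timing_error if s % 2 == 0 else -timing_error)
             for s in range(n_sections)]
    lap_b = [true_section - (timing_error if s % 2 == 0 else -timing_error)
             for s in range(n_sections)]
    # Each whole lap still sums to true_lap_time, but the per-section
    # minimum always picks whichever reading came out short.
    return sum(min(a, b) for a, b in zip(lap_a, lap_b))


# Assumed values: a 60 s lap and a 0.1 s error per section.
few_markers = apparent_best_lap(60.0, 2, 0.1)
many_markers = apparent_best_lap(60.0, 50, 0.1)
```

In this model both laps are really 60 s, yet the apparent best lap drops by the timing error once per section, so 50 sections give a far more optimistic figure than 2. Real errors are not this regular, but the direction of the effect is the same: picking the minimum in every section favours readings that happen to be short.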